27 January 2023

By Catalina Grosz and JingKai Ong


Here’s how ChatGPT can fuel your behavioural science research

*AN: One of the following paragraphs was written by ChatGPT. Can you tell which one? The answer is at the bottom of the blog!

In the past months, ChatGPT has become a household name. The AI software launched by OpenAI in November 2022 has already taken the world by storm, providing users with an easy-to-use interface in the form of a chatbot. ChatGPT can help debug code, correct grammatical mistakes, help clarify doubts and much more.

Many researchers who regarded artificial intelligence as something from the far-off future are now coming to terms with how easy it is to use and how helpful it can be in tackling everyday research tasks. In a recent survey, Fishbowl found that 30% of professionals have already used ChatGPT for work (Constantz, 2023). This new software has been described as the “calculator for writing” (Aldrick, 2023).

Just as a calculator requires human input to return solutions, ChatGPT requires an influx of information it can learn from in order to provide users with answers and suggestions. This means that if the information ChatGPT uses to learn is inaccurate, the output it provides users will be inaccurate too. It is therefore unsurprising that researchers would disapprove of ChatGPT being listed as a co-author on a journal article (Mellon, 2022). Yet, we strongly believe that ChatGPT is a good tool to add to your research toolbox. Here are five ways we found ChatGPT will be able to lend you a hand during your research.

1- Coding and analysing open-ended survey responses.

It is common to use open-ended questions in surveys to gather additional understanding about stated attitudes and behaviours. This type of question allows respondents to express themselves and can be a great way for researchers to gain a deeper understanding of a topic. However, coding and analysing open-ended responses is a burdensome job, requiring substantial time and expertise in qualitative analysis methods. In comes ChatGPT. By simply asking the chatbot to look for repeated themes and to organise the text accordingly, researchers can cut the workload considerably. ChatGPT can also help to uncover underlying themes in interview transcripts and categorise them.

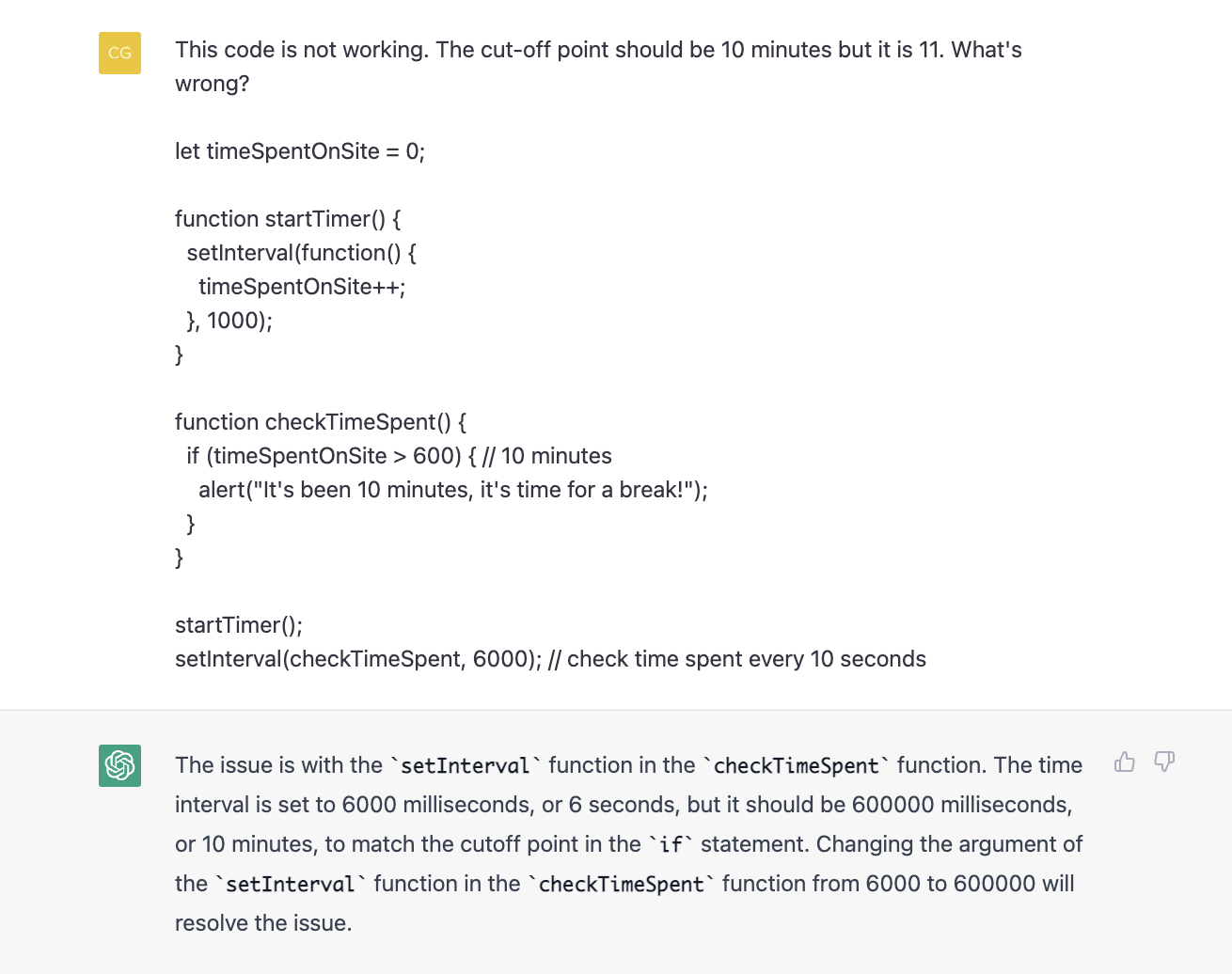
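For readers who want to make this step repeatable, here is a minimal sketch in TypeScript of how a batch of responses could be turned into a single prompt before pasting it into the chatbot. The function name `buildThemePrompt`, the wording of the instruction and the sample answers are all our own assumptions for illustration; no ChatGPT API is called.

```typescript
// Hypothetical helper (not part of any library): formats open-ended survey
// answers into one prompt that can be pasted into a chatbot.
function buildThemePrompt(responses: string[], maxThemes: number = 5): string {
  // Drop blank answers, then number the remaining ones so that themes can be
  // traced back to individual responses.
  const numbered = responses
    .map((text) => text.trim())
    .filter((text) => text.length > 0)
    .map((text, index) => `${index + 1}. ${text}`);

  const instructions = [
    `Below are ${numbered.length} open-ended survey responses.`,
    `Identify up to ${maxThemes} recurring themes, name each theme,`,
    "and list the response numbers that belong to it.",
    "",
  ];
  return instructions.concat(numbered).join("\n");
}

// Made-up sample answers, for illustration only.
const sampleThemePrompt = buildThemePrompt([
  "I recycle because my neighbours do.",
  "   ",
  "Sorting waste takes too much time.",
]);
console.log(sampleThemePrompt);
```

Numbering the responses makes it easier to check the chatbot's grouping against the raw data afterwards, which matters given the limitations discussed later in this post.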

2- Troubleshooting and improving code.

Writing code can take a long time, and troubleshooting bugs adds to the burden. This process can be simplified by asking ChatGPT to go through the code. Given the code and a little background information about what it is supposed to do when run, ChatGPT can help researchers find and correct the source of the error. If the chatbot does not fully understand, it will ask the user clarifying questions until it can provide a sufficient answer. Below is an example of ChatGPT helping to debug JavaScript code meant to generate a website that sends out an alert when the user has spent ten minutes on it.
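To give a rough idea of the kind of script described, here is a minimal sketch in TypeScript of a ten-minute alert. This is our own illustration, not the code from the ChatGPT conversation: the names `TEN_MINUTES_MS` and `scheduleTimeOnPageAlert` are hypothetical, and the notification function is passed in so that the logic can be tried outside a browser.

```typescript
// setTimeout expects its delay in milliseconds, so ten minutes is 600,000.
// Passing 10 (or 600) here is a typical slip in scripts like this one.
const TEN_MINUTES_MS = 10 * 60 * 1000;

// Hypothetical helper: calls `notify` once after `delayMs` has elapsed and
// returns a function that cancels the pending alert.
function scheduleTimeOnPageAlert(
  notify: (message: string) => void,
  delayMs: number = TEN_MINUTES_MS,
): () => void {
  // The callback is wrapped in an arrow function. Writing
  // setTimeout(notify("..."), delayMs) would call notify immediately,
  // which is another common bug.
  const timer = setTimeout(() => {
    notify("You have spent ten minutes on this page.");
  }, delayMs);
  return () => clearTimeout(timer);
}

// In a browser, one might start the timer when the page loads:
// scheduleTimeOnPageAlert((message) => window.alert(message));
```

When asking ChatGPT for help with a script like this, it is worth stating the intended behaviour explicitly ("alert once after ten minutes on the page"), since the two slips noted in the comments produce no error message at all.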

3- Improving writing.

ChatGPT can assist researchers by checking their writing for grammatical errors and providing suggestions for improvement. It can also help researchers with their sentence structure and wording. Researchers can use ChatGPT to proofread and edit their written work, which can help them to produce more polished and professional documents. Additionally, ChatGPT's ability to understand and respond to natural language input allows researchers to easily ask for clarification or information on specific topics, which can aid in their research and writing process. Overall, ChatGPT can be a useful tool for researchers, helping them to communicate their findings more effectively and efficiently.

4- Brainstorming machine.

Writer's block is a serious ailment among researchers. For those days when ideas are lacking, consider a chat with ChatGPT. As the name suggests, this chatbot makes great conversation, replying instantly in a human-like manner that will make the best of us wonder if there is really a human behind the machine. Using ChatGPT as a sounding board for ideas will allow the researcher to access new information they may not have considered. And if the user has any doubt about how to approach a writing task, they can simply ask ChatGPT to draft a paragraph or two, which can later be used as a guide for their own work.

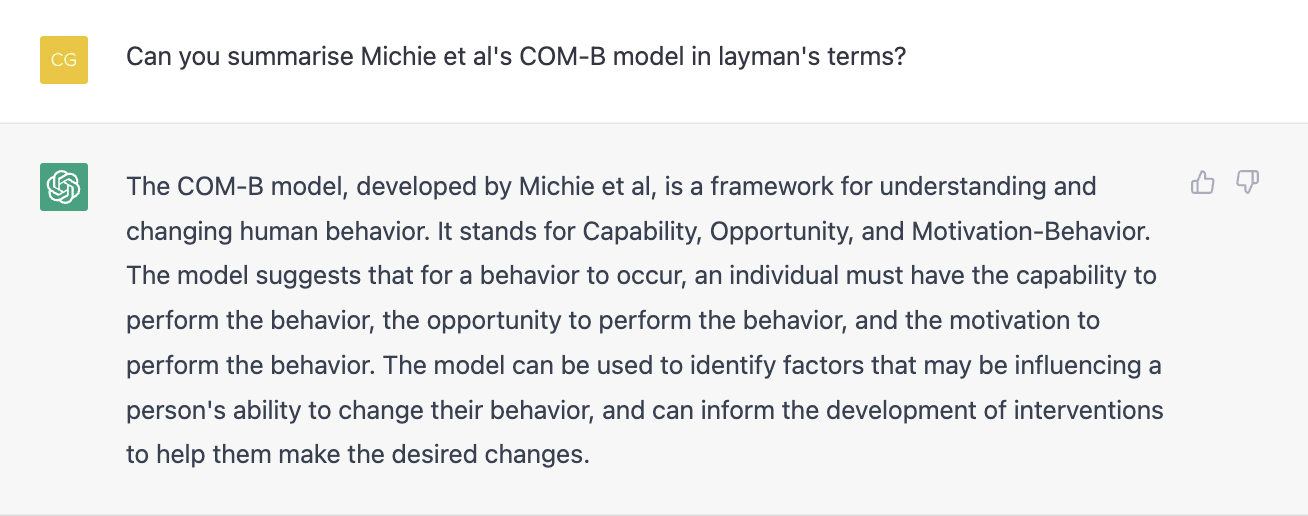

5- TL;DR: Learning resource.

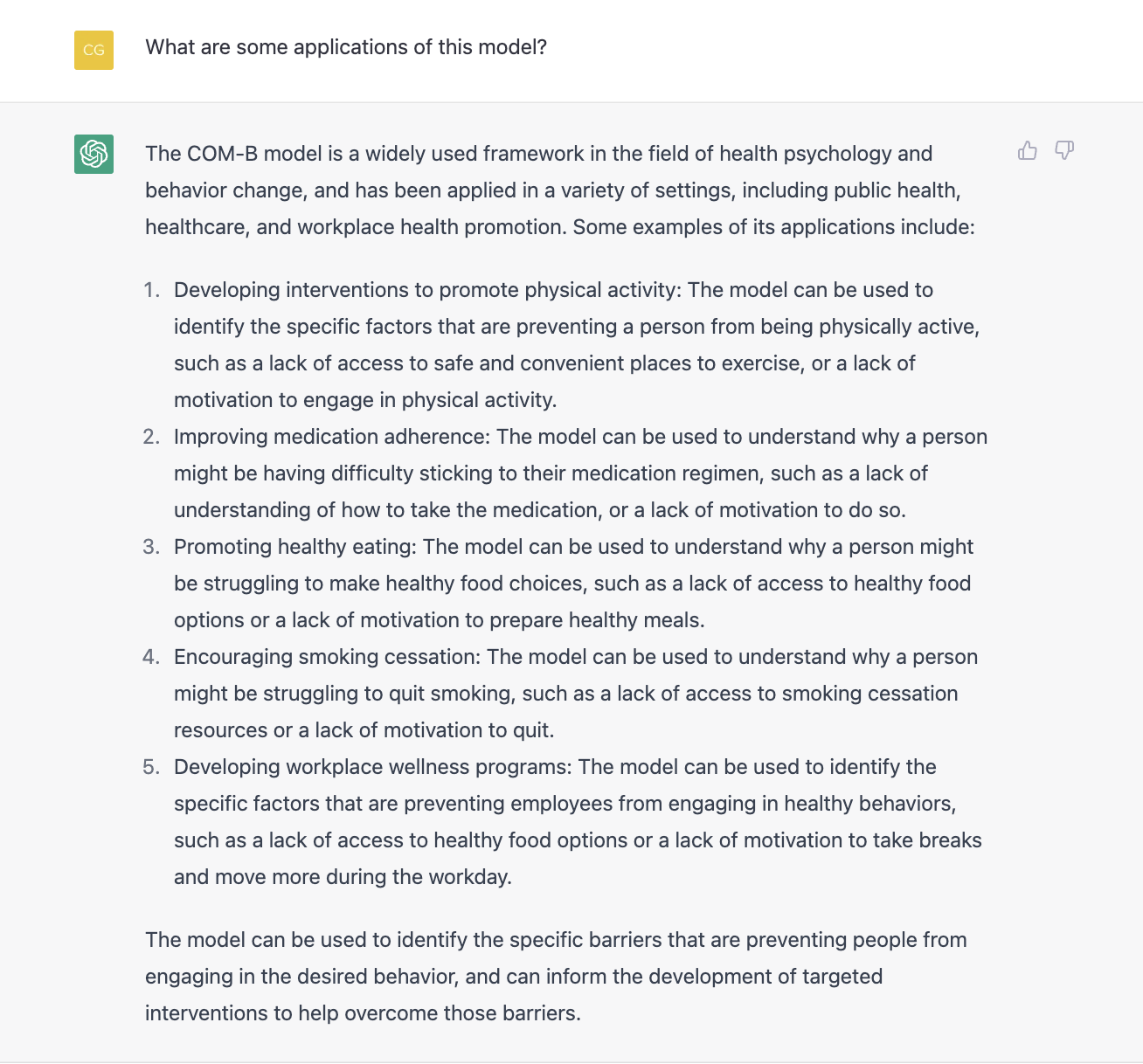

The biggest task when it comes to research writing has to be the literature review. Going through journal article after journal article, paper after paper, can take many hours of hard work. That is, if you avoid falling into a rabbit hole and chasing a reference down through various publications. Well, fret no longer, for ChatGPT’s TL;DR function can summarise large pieces of text. By simply adding the acronym for “too long; didn’t read” (TL;DR) at the end of any text, the software returns a summary of its key points. This makes it easy for researchers to get a quick rundown of the topics covered in the text. This can additionally be used to learn new topics. Paired with the chatbot’s capacity to translate complex topics into layman’s terms, tackling the learning process becomes easier and quicker for researchers. If the user does not fully understand the response, they can ask the software clarifying questions and even ask it for examples. In the following example, ChatGPT was asked to explain Michie et al.’s (2011) COM-B framework in simple terms and then asked to go through applications for this model.
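Separately from that example, the TL;DR trick itself is simple enough to sketch in a few lines of TypeScript. The helper name `withTldr` is our own invention, and the snippet only prepares the text to paste into the chatbot; it does not call ChatGPT.

```typescript
// Hypothetical helper: appends the "TL;DR" cue to the end of a passage,
// as described above, after removing any trailing whitespace.
function withTldr(passage: string): string {
  const trimmed = passage.replace(/\s+$/, "");
  return `${trimmed}\n\nTL;DR`;
}

// Placeholder text standing in for a pasted abstract or article section.
const summaryPrompt = withTldr("Paste the abstract or section to summarise here.   \n");
console.log(summaryPrompt);
```

Because the summary can still contain mistakes, it is best treated as a first pass that points to the sections worth reading in full.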

Just as a calculator is a tool that helps the user arrive at a solution, ChatGPT should be used as a tool that helps researchers reach their goals rather than as an all-knowing pool of wisdom. At the end of the day, the AI software is just a reflection of the information it blindly collects online and is not without its flaws. Beyond the ethical considerations attached to AI, which we will go into in our next blog post on the topic of artificial intelligence, ChatGPT has been guilty of providing inaccurate information and incoherent text known as “gibberish” (Marr, 2020). In one instance, ChatGPT provided a fully coherent explanation of a non-existent phenomenon (check out the whole Twitter thread). Wrong answers can sometimes be so compelling that StackOverflow, a website where programmers can go to clarify coding doubts, has banned solutions generated by ChatGPT on the basis that even incorrect code can look deceptively accurate (Malik, 2022). It doesn’t help that ChatGPT is currently a black-box software, meaning that it is not possible to see how the algorithm “thinks” and arrives at a conclusion (Marr, 2020). In addition, the software’s knowledge base goes no further than 2021, making it ignorant of events that have taken place since (Johnson, 2022).

Overall, as long as the researcher is wary of the chatbot’s limitations and acts accordingly by cross-referencing and checking any information they are unsure of, there should be no problem in using ChatGPT to help lift some of the weight. After all, this is only the third iteration of the chatbot, and it is likely the mistakes and inaccuracies will soon be ironed out.

Researchers are advised to buckle in, for this is only the beginning of what AI can do for us. In the words of OpenAI’s CEO, Sam Altman, “AI is going to change the world, but GPT-3 is just an early glimpse” (Altman, 2020).

In our next blog post about AI we will consider the challenges and concerns that artificial intelligence may pose for social research. Stay tuned!

Recommended reading:

- Learn more about ChatGPT and the OpenAI API on their website

- Here’s What To Know About OpenAI’s ChatGPT—What It’s Disrupting And How To Use It by Arianna Johnson

- What Is GPT-3 And Why Is It Revolutionizing Artificial Intelligence? by Bernard Marr

*AN: The paragraph titled Improving writing was written by ChatGPT using the prompt “Write a paragraph explaining how chatGPT can help researchers by checking for grammatical errors and can help them improve their writing.”