2 November 2023

Filippo Muzi-Falconi

Using Innovative Experiments to Create Better Digital Environments

People invest a significant amount of their time on digital platforms – for entertainment, shopping, socialising, and much more – and our online experiences have tangible effects on our offline lives. It is therefore crucial that policymakers, charitable organisations, and advocacy groups work together to ensure that digital environments are designed in ways that safeguard and improve our wellbeing.

Careful research – ideally drawing on behavioural insights and experimentation – is needed to identify aspects of digital platforms that harm, or could improve, users’ wellbeing. However, those who are interested in changing platforms for the better often lack direct access to those platforms, which can significantly hinder this type of research.

To address this challenge, we have developed an innovative experimental methodology that allows us to test how elements of digital environments influence our wellbeing and behaviour, in addition to letting us evaluate whether different interventions might improve consumer outcomes – all without needing direct access to the actual platforms.

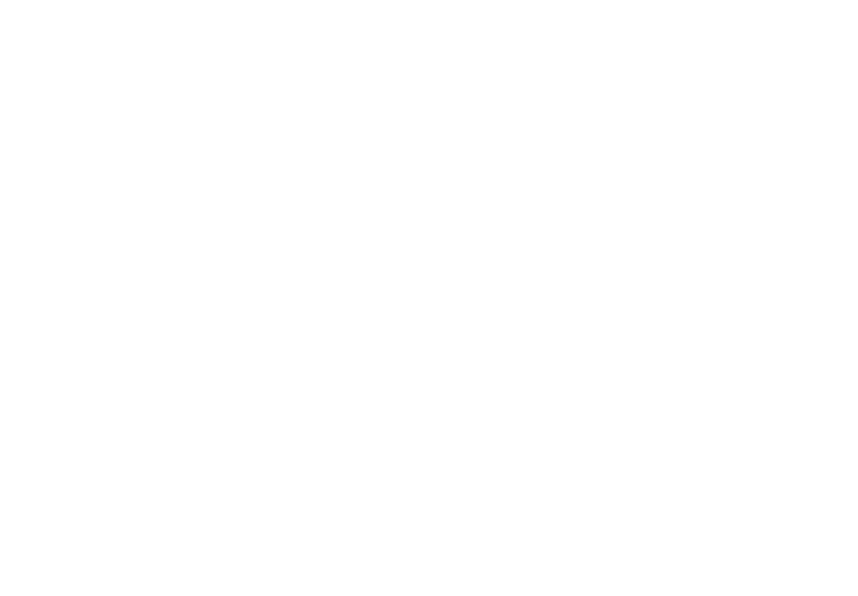

Creating highly realistic simulations of digital platforms

Our approach starts by recruiting a sample of study participants that is representative of the wider population or target audience. This helps ensure that the results from our studies are robust and generalise to that group.

We then develop highly realistic replicas of digital platforms, matching their look and feel, within an online survey. Participants are first presented with scenarios designed so that they interact with the platform replica in a natural way and the actions they take in the study reflect real-life choices.

Next, we randomly assign participants to various experimental conditions to test different interventions, such as altering digital interfaces or adding new features or products to existing platforms. Our approach is flexible, as we custom-code the entire study, enabling us to explore a wide range of interventions.
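To make the random assignment step concrete, here is a minimal Python sketch. It is an illustration under our own assumptions rather than the code used in the studies: the function name `assign_conditions`, the participant IDs and the condition names are all hypothetical. It shuffles participants with a seeded random number generator and deals them across conditions so that group sizes stay balanced.

```python
import random
from collections import Counter


def assign_conditions(participant_ids, conditions, seed=0):
    """Randomly assign each participant to one condition with balanced group sizes."""
    rng = random.Random(seed)  # seeded so the allocation can be reproduced
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    # Deal the shuffled participants round-robin across the conditions.
    return {pid: conditions[i % len(conditions)] for i, pid in enumerate(shuffled)}


# Hypothetical participant IDs and condition names, for illustration only.
participants = [f"p{n:03d}" for n in range(12)]
conditions = ["control", "warning_banner", "redesigned_interface"]

assignment = assign_conditions(participants, conditions, seed=42)
group_sizes = Counter(assignment.values())
print(group_sizes)
```

Shuffling and then dealing participants round-robin keeps the groups the same size (or within one participant of each other), which simple per-participant coin flips would not guarantee in small samples.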

Replicating digital platforms within an online survey environment enables us to track participants’ actions and understand how they interact with the platform. This detailed data tells us how interventions affect participants’ behaviour. We also include survey questions to understand participants’ intentions and beliefs, such as why they make certain choices and the extent to which they expect to use certain features or products in the future.

Taken together, our methodology not only enables us to determine the effectiveness of a policy intervention but also provides insights into the reasons behind its success and practical implementation techniques for optimising its impact. Furthermore, it allows us to identify potential unintended consequences of policy interventions, such as impacts on usability and consumer satisfaction, and estimate their effects on consumer welfare.

Figure 1: Recording of desktop device setup simulation for browser choice testing (Mozilla).

Applying our methodology to tackle real-world policy challenges: examples from our past work

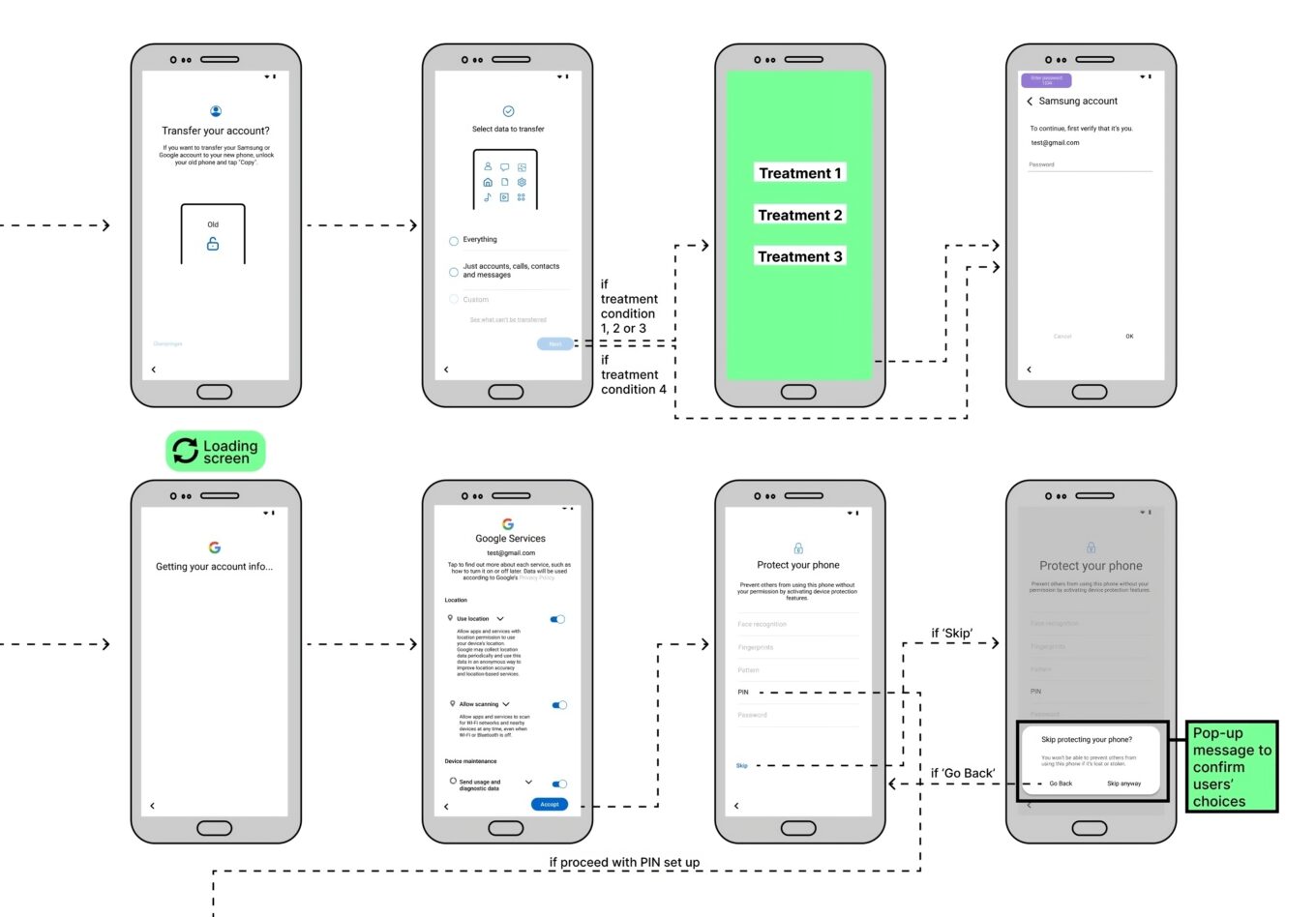

Which? - Tackling fake reviews

Online customer reviews are a valuable resource for many consumers, yet they are not always trustworthy. Fake reviews are commonly found in popular online platforms, posing potential risks for consumers who might buy poor or unsafe products. However, there is a lack of evidence on the extent to which fake reviews affect consumer behaviour and welfare.

To address this issue, we partnered with Which? to explore how fake reviews in online marketplaces impact consumer behaviour and test possible interventions to protect consumers from them.

We conducted an experiment in which we simulated a portion of Amazon’s platform, asked participants to complete a shopping task, and showed a randomly selected group of participants a banner informing them about fake reviews and giving them simple tips for identifying them. The results revealed that fake reviews are highly effective at misleading consumers into choosing inferior products, more than doubling the proportion opting for "Don't Buy" items. Notably, fake reviews do not need to be sophisticated to impact consumer behaviour. Additionally, while warning banners can reduce the effectiveness of fake reviews, they fall short of providing a complete solution, highlighting the need for platforms to take substantial measures to curb the prevalence of fake reviews. Click here to learn more about this study.

Figure 2: Amazon platform simulation to evaluate fake review impact on consumer behaviour (Which?).

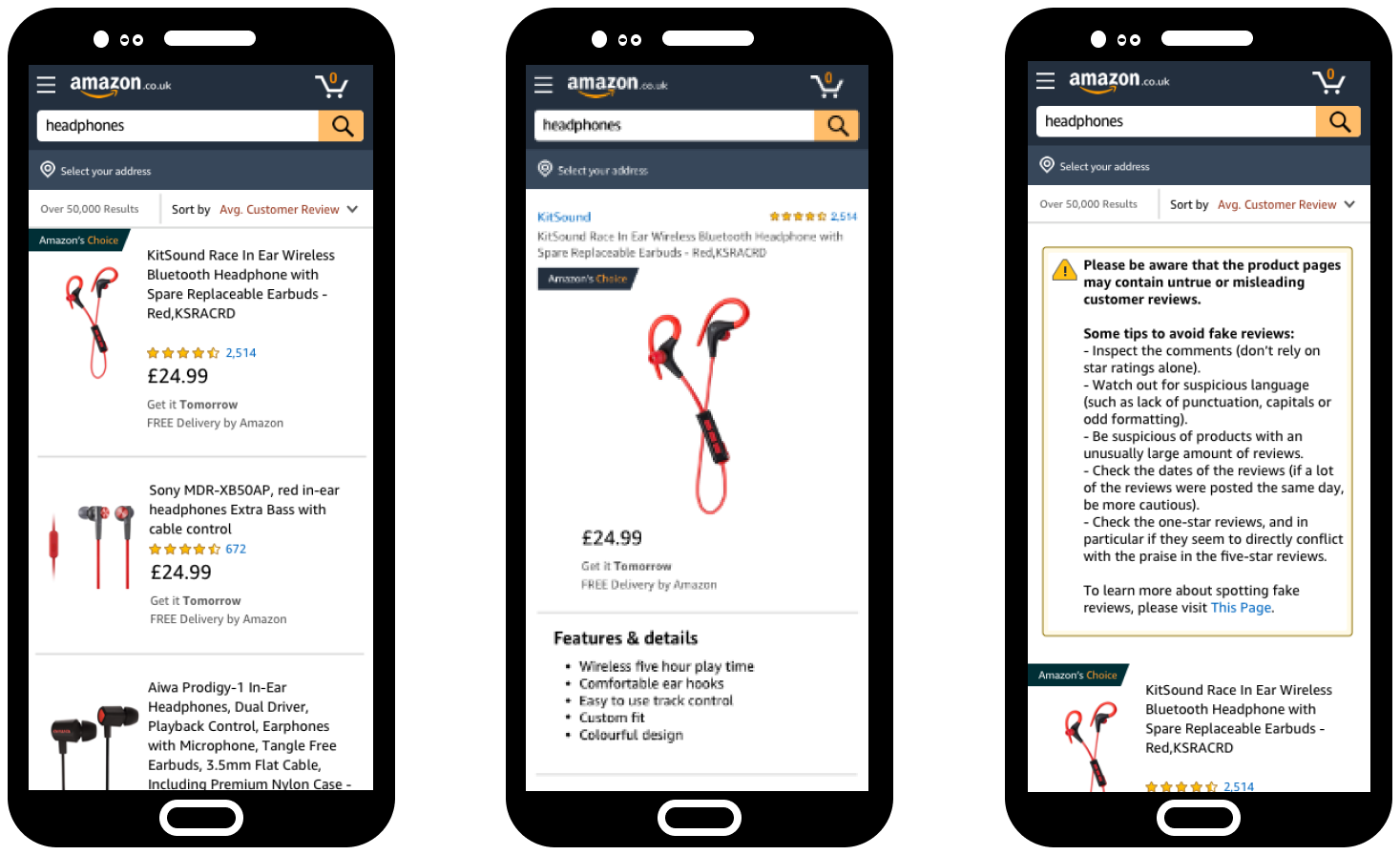

Mozilla - Fostering browser competition

We conducted a study with Mozilla to inform policies aimed at addressing deep-seated competition issues in browsers and browser engines. More specifically, the experiment sought to understand how providing users with the option to choose their preferred browser when setting up their device affects the choices they make and their levels of satisfaction.

In the experiment, we developed a highly realistic simulation of the setup process for mobile and desktop devices and varied the design, content and timing of browser choice screens to see how these factors influence consumer choice.

We found that consumers prefer having the option to choose their default browser when setting up their device and that browser choice screens have the potential to improve competition in the browser market. Interestingly, the inclusion of such screens extended the device setup process without overburdening users or consuming too much time, representing an all-round advantage for consumers. Click here to learn more about this study.

Figure 3: Android device setup simulation for browser choice testing (Mozilla).

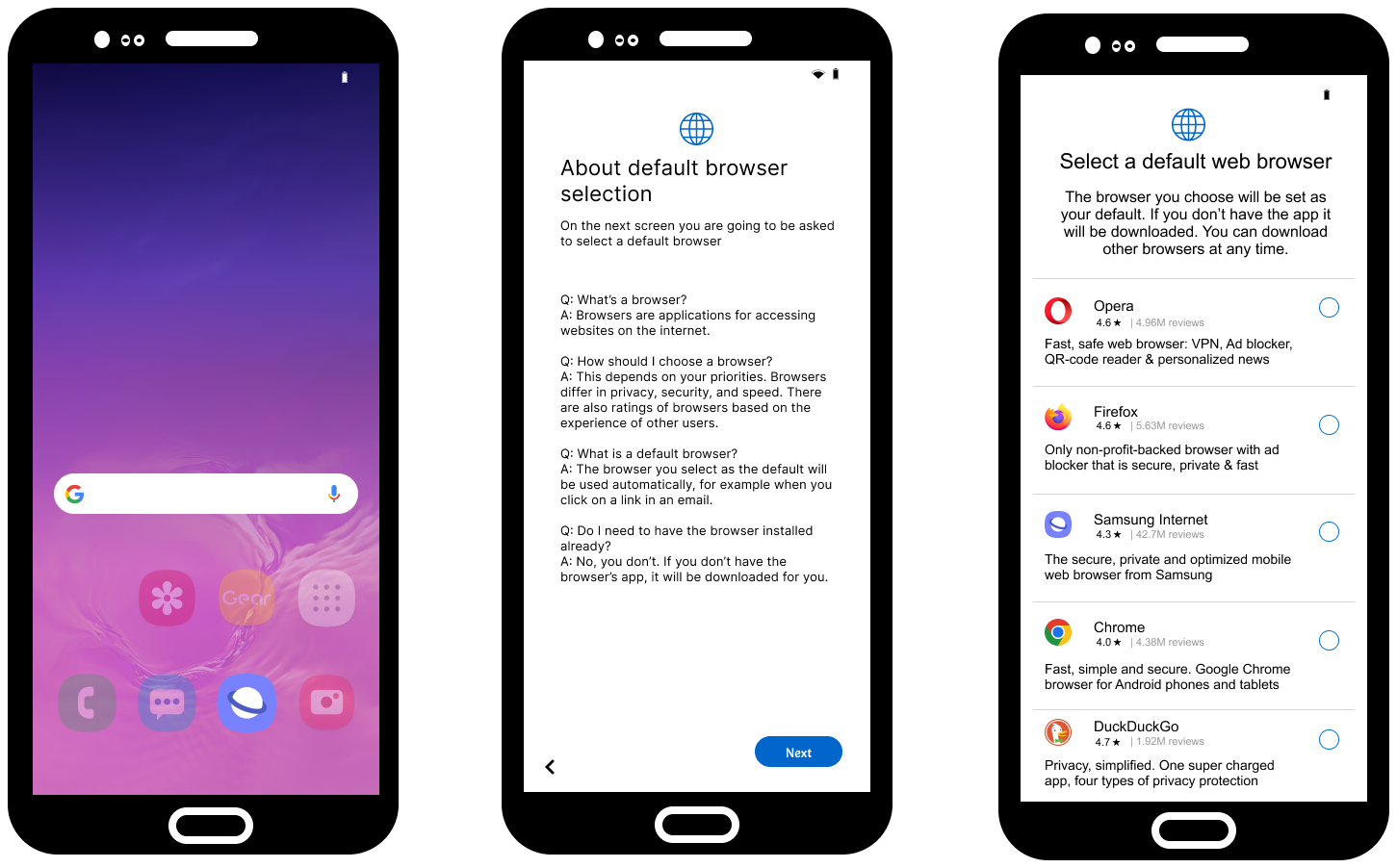

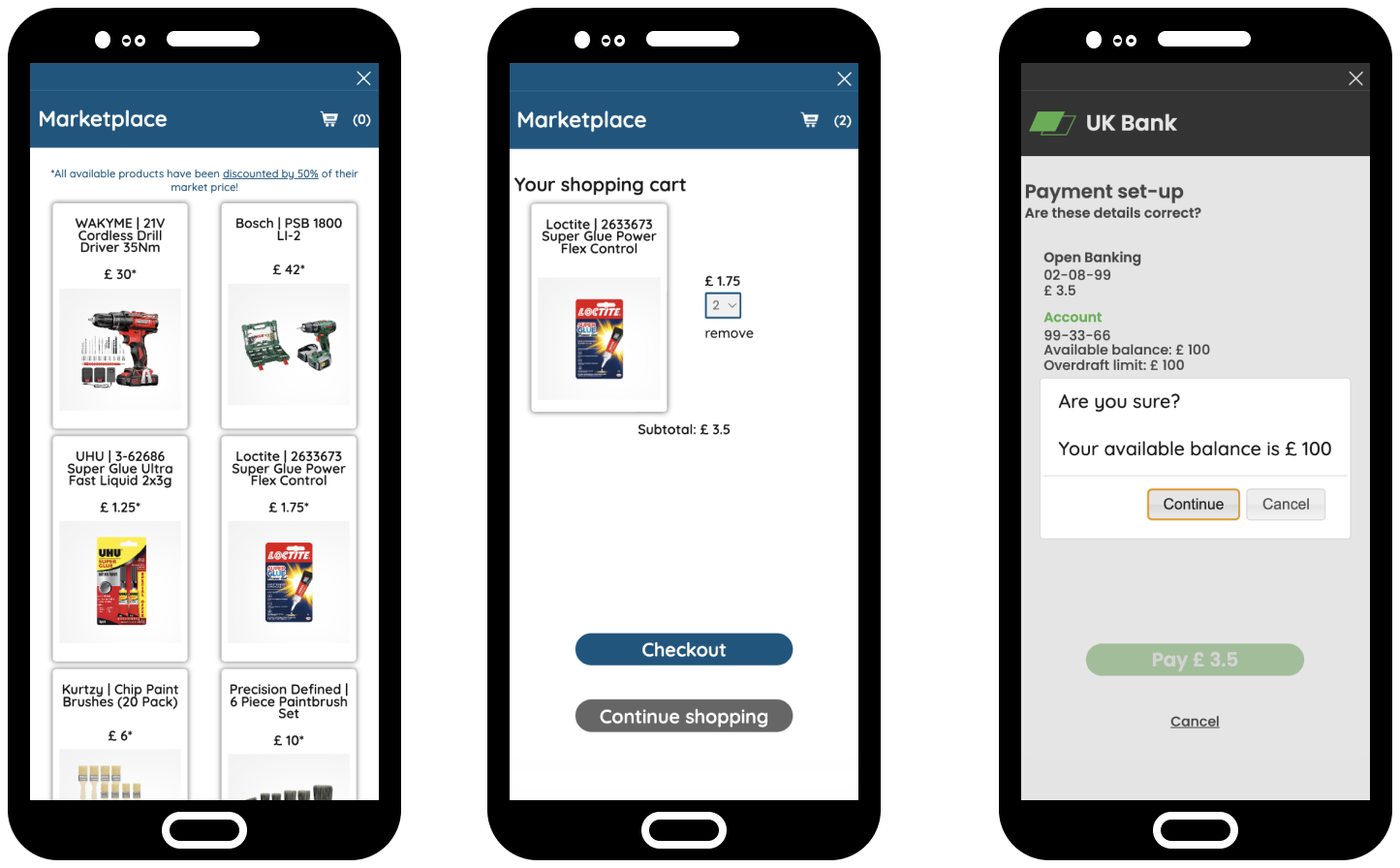

Open Banking Implementation Entity - Exploring balance statements

We partnered with the Open Banking Implementation Entity (OBIE) to explore the impact of displaying balance statements in Open Banking payment journeys. On the one hand, balance statements may help consumers make more informed decisions as they purchase goods and services. On the other hand, they may create unnecessary friction for consumers as they pay, and consequently make Open Banking a less attractive payment option.

We conducted a large-scale study with 7,500 UK adults in which participants browsed an online shop replica and made purchases using a debit card or a bank app (i.e., using Open Banking). They were randomly assigned to conditions with varying balance statement designs and content so that we could observe the effect on consumer behaviour. The experiment results were combined with survey data that assessed consumer welfare, attitudes and stated preferences regarding the inclusion of balance statements in online banking apps.

Findings showed that balance statements significantly influence consumer behaviour and attitudes, but different types of balance statements had different effects. For example, introducing certain types of balance statements to the Open Banking journey made participants more likely to prefer Open Banking as a payment method. Certain balance statements also made participants less likely to unintentionally go into overdraft and less likely to overestimate their closing balance. Overall, the vast majority stated that they would like their bank app to display balance statements.

Figure 4: Mobile Open Banking user journey simulation to assess balance statements’ impact on consumer behaviour (Open Banking Implementation Entity)

Advantages of our experimental methodology for informed policymaking

Overall, our experimental approach offers the following advantages:

- Evaluate Prospective Policy Interventions: Policymakers can assess the impact of potential policy interventions on specific digital platforms without needing direct access to these platforms.

- Understand What Works and How: Our methodology not only helps policymakers determine whether a policy intervention is effective but also provides insights into the optimal implementation of such interventions in a digital environment (e.g., placement in the user journey, visual display, feature design).

- Mixed Method Approach: By combining data on participants' actions with survey and open-ended data, our methodology employs a mixed method approach, enabling a comprehensive understanding of why a given intervention does or does not work.

We believe our new experimental approach has the potential to help shape policies within the digital and tech industries. It could be particularly useful in addressing current challenges like online product labelling (such as carbon and reparability labelling) and protecting the mental wellbeing of users on social media platforms.

Examples of questions our approach could help address:

- Does the presence of a carbon label on products in online marketplaces influence consumers' choices towards more sustainable options?

- What's the optimal design for such a label to achieve the greatest impact?

- Does introducing this new label create any frictions in the online user journey?

- Overall, what is the impact on consumer welfare?

If you or your organisation are interested in experimenting on digital platforms to inform policy decisions, don't hesitate to fill out our Get in Touch form.